Raven (Part-2)

Architecture and Results

From Framework to Model

In Part 1, we introduced Routing Slot Memories (RSM), a framework that combines the selective writing of SWA with the gradual forgetting of SSMs. The next step was to turn that framework into a concrete model.

RSM is deliberately general. To instantiate Raven, one needs to choose how the router $r_t$ is computed and how the decay term $a_t$ is parameterized. We wanted a design expressive enough to learn meaningful slot assignments, but simple enough to train stably at scale.

Our recurrence uses separate key and value states, mirroring SWA, but replaces hard deletion with learned decay:

Unlike standard SSMs
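As a rough illustration of a recurrence of this kind, the sketch below keeps one key row and one value row per slot, decays them with $a_t$, and writes the current token with weights $r_t$. The function name `rsm_step`, the tensor shapes, the softmax read, and the exact form of the update are assumptions made for this example, not Raven's actual equations.

```python
import numpy as np

def softmax(x):
    x = x - np.max(x)          # shift for numerical stability
    e = np.exp(x)
    return e / e.sum()

def rsm_step(K, V, k_t, v_t, q_t, r_t, a_t):
    """One hypothetical routed-slot update (illustrative, not the paper's formula).

    K, V          : (M, d) key and value states, one row per slot
    k_t, v_t, q_t : (d,) current key, value, and query
    r_t           : (M,) routing weights, zero for slots that are not written
    a_t           : (M,) per-slot decay in [0, 1]
    """
    # Decay the old contents of each slot, then add the routed write.
    K = a_t[:, None] * K + r_t[:, None] * k_t[None, :]
    V = a_t[:, None] * V + r_t[:, None] * v_t[None, :]
    # Read by attending over the M slots, as SWA attends over its window.
    o_t = softmax(K @ q_t) @ V
    return K, V, o_t

# Special case: one-hot round-robin routing with a_t = 1 - r_t overwrites the
# oldest slot at every step, which behaves like a sliding window of size M.
rng = np.random.default_rng(0)
M, d, T = 4, 3, 10
K, V = np.zeros((M, d)), np.zeros((M, d))
keys = rng.normal(size=(T, d))
for t in range(T):
    r_t = np.eye(M)[t % M]
    K, V, o_t = rsm_step(K, V, keys[t], keys[t], keys[t], r_t, 1.0 - r_t)
```

After the loop, the slots hold exactly the last `M` keys, which is the hard-deletion behavior of SWA. Letting `a_t` take values strictly between 0 and 1 turns that deletion into gradual forgetting.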

For routing, we draw inspiration from the DeepSeek Mixture-of-Experts (MoE) family

By isolating only the highest-scoring slots, the memory dynamically specializes based on the content of the token. Finally, to keep the effective write magnitude stable, these gated scores are normalized into the final routing weights:

Here, $\alpha$ controls the effective write scale, similarly to the role of temperature-like scaling in GLA
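The gating and normalization steps can be sketched in a few lines. This is a minimal example under stated assumptions: the sigmoid affinity, the function name `route`, and the choice to normalize by the sum of the selected scores are illustrative and may differ from Raven's actual parameterization.

```python
import numpy as np

def route(logits, k, alpha=1.0):
    """Top-k routing weights over M slots (hypothetical sketch)."""
    scores = 1.0 / (1.0 + np.exp(-logits))   # per-slot affinity (sigmoid is an assumption)
    top = np.argsort(scores)[-k:]            # indices of the k highest-scoring slots
    gated = np.zeros_like(scores)
    gated[top] = scores[top]                 # zero out every slot outside the top k
    return alpha * gated / gated.sum()       # normalize, then scale the write by alpha

logits = np.array([2.0, -1.0, 0.5, 3.0])
r = route(logits, k=2, alpha=0.5)
```

In this example only slots 0 and 3 receive a nonzero weight, and the weights sum to `alpha`, so the total write magnitude stays fixed regardless of how confident the router is.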

Design Decisions

With the recurrence fixed, we turned to the block design.

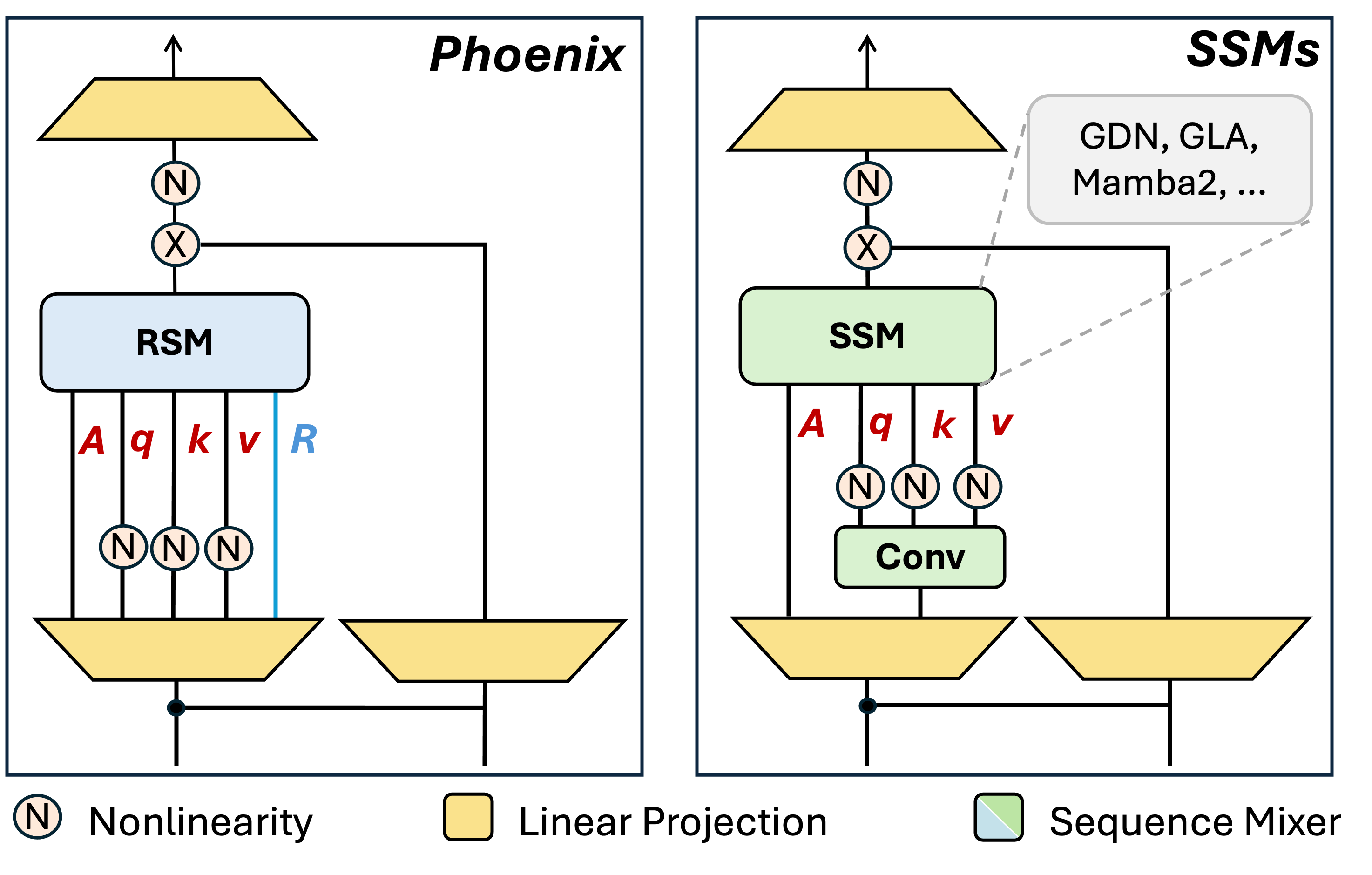

Dropping the short convolution. Most linear transformer blocks include a short depthwise convolution before the recurrence — a holdover that helps capture local context. We removed it. The reasoning: SWA is mathematically equivalent to an input-dependent convolution, and Raven’s RSM already subsumes SWA as a special case. Adding a separate convolution on top would be redundant.

On norms. Following common practice in both Transformers and linear attention/SSMs, we apply QK-RMSNorm to queries and keys to stabilize training and avoid gradient explosions. One interesting exception appears in hybrid Raven, where Raven layers are interleaved with attention. In that setting, removing these norms improves length generalization, especially on recall tasks. We hypothesize that the norms suppress position-related information that the NoPE attention layers rely on. This was one of the more unexpected ablation results.

Side Note on RoPE

In early experiments, we applied Rotary Position Embedding (RoPE)

No load balancing. In Mixture-of-Experts models, load-balancing losses are commonly used to spread tokens more evenly across experts. We do not use such a loss in Raven, since its memory is most useful when allocation is uneven. Slots that specialize in retrieval-critical tokens, such as passkeys, variable names, or rare facts, should receive more of those tokens rather than be pushed toward uniform usage. For this reason, we allow the router to specialize freely, and use Gumbel noise during training only to encourage exploration and avoid early collapse. This intuition is supported by our experiments: retrieval-critical tokens naturally cluster into dedicated slots, while other slots remain available for ordinary context.
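One common way to add this kind of exploration is to perturb the router logits with Gumbel(0, 1) noise before the top-k selection, and only during training. The sketch below assumes that setup. The function name `gumbel_topk` and the unscaled noise are illustrative choices, not details confirmed by the text above.

```python
import numpy as np

def gumbel_topk(logits, k, rng, training=True):
    """Select k slots; logits are perturbed with Gumbel noise only in training."""
    if training:
        # Gumbel(0, 1) sample: -log(-log(u)) with u uniform in (0, 1).
        u = rng.uniform(low=1e-9, high=1.0, size=logits.shape)
        logits = logits - np.log(-np.log(u))
    return np.sort(np.argsort(logits)[-k:])

rng = np.random.default_rng(0)
logits = np.array([2.0, -1.0, 0.5, 3.0])

# Count how often each slot is selected over many noisy training-time draws.
counts = np.zeros(4, dtype=int)
for _ in range(2000):
    counts[gumbel_topk(logits, k=2, rng=rng)] += 1

eval_choice = gumbel_topk(logits, k=2, rng=rng, training=False)
```

With noise, low-scoring slots are occasionally selected, so they still receive gradient early in training. At evaluation time the selection is deterministic. No term pushes `counts` toward uniform, which matches the decision to skip a load-balancing loss.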

Empirical Results

We now turn to the empirical evaluation. Our main question was whether Raven improves in-context retrieval without sacrificing general language modeling.

Retrieval Ability

We begin with retrieval. We compare Raven against Mamba-2

The baselines' behavior is consistent with their memory mechanism: Mamba-2 and GDN write every token to every slot, so over a long sequence, each slot becomes a blurry average of everything it has ever seen. By 8K tokens, already $4\times$ their training length, the passkey signal is too diluted to recover.

Raven behaved differently. It held near-perfect accuracy ($\geq \mathbf{99\%}$) all the way to 16K tokens, and remained the only model at the 400M scale to keep strong performance ($> \mathbf{91\%}$) at 32K — that’s $\mathbf{16\times}$ its training length.

Overall, Raven is dramatically better at NIAH-1 than every baseline.

This behavior is consistent with the routing mechanism: the passkey can be written into a dedicated slot and remain recoverable over long spans, rather than being diluted by subsequent tokens.

Language Modeling

A natural question is whether this improvement in retrieval comes at the expense of general language modeling. We had deliberately made the memory uneven — would that hurt perplexity or zero-shot benchmarks?

Across standard evaluations, Raven matched or surpassed Mamba-2

Hybrid Raven

We also experimented with a hybrid variant — interleaving Raven RSM layers with standard attention layers. The results here were striking. The hybrid Raven achieved near-perfect NIAH-1 accuracy up to 32K tokens and strong NIAH-2 performance up to 16K tokens, while other SSM hybrids like GDN+Attn

In the 800M hybrid setting, Raven is the best model for in-context recall and length extrapolation — while GDN and SWA+RoPE drop to 0% accuracy beyond their training length, Raven retains around 80% accuracy on NIAH-2 at 64K tokens, which is 16× its training sequence length.

Length Generalization

One surprising outcome was Raven’s strong length generalization. During evaluation, we found that Raven remained effective far beyond its training length, beyond what had previously been observed in SSM-based models. This behavior was not explicitly designed for, which motivated us to look for a mechanistic explanation. In developing that explanation, we found it useful to think in terms of Effective Sequence Length (ESL), a concept that came out of our discussion with Ricardo.

In a standard SSM, every slot receives every token, so the effective sequence length of each slot is $T$. In SWA, each slot receives exactly $\frac{T}{M}$ tokens, yielding uniform allocation across slots. In Raven, routing produces a more heterogeneous pattern: some slots receive many tokens, while others receive only a small subset, including rare retrieval-critical tokens. As a result, different slots operate over different effective sequence lengths, which may help explain Raven’s improved length generalization.
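A small numerical example makes the three regimes easier to compare. It assumes that a slot's ESL can be approximated by the number of tokens routed to it with nonzero weight. That counting definition, the helper `effective_lengths`, and the skewed assignment below are all hypothetical.

```python
import numpy as np

def effective_lengths(R):
    """Per-slot count of tokens with nonzero routing weight.

    R : (T, M) array whose row t holds the routing weights r_t.
    """
    return (R > 0).sum(axis=0)

T, M = 12, 4

# Standard SSM: every token is written to every slot.
ssm = np.ones((T, M))

# SWA: tokens are written round-robin, one slot per token.
swa = np.eye(M)[np.arange(T) % M]

# Routed memory: a made-up skewed assignment, one slot per token.
assignment = [0, 0, 0, 1, 0, 0, 2, 0, 1, 0, 0, 3]
routed = np.zeros((T, M))
routed[np.arange(T), assignment] = 1.0

print(effective_lengths(ssm))     # [12 12 12 12]  -> T per slot
print(effective_lengths(swa))     # [3 3 3 3]      -> T / M per slot
print(effective_lengths(routed))  # [8 2 1 1]      -> heterogeneous
```

In the routed case, the slots holding one or two tokens see a much shorter effective sequence than the busiest slot, even though the total sequence length is the same in all three regimes.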

To make this concrete, we visualize Raven’s hidden state on a synthetic NIAH task:

The passkey — shown in $\color{red}{red}$ — is routed into a dedicated region of the hidden state and remains separated from most ordinary tokens. Ordinary tokens shown in $\color{green}{green}$ flow through the general-purpose slots without touching it. Some slots shown in $\color{blue}{blue}$ serve both roles. Crucially, different heads allocate different amounts of memory to retrieval-critical tokens — the model discovered this specialization entirely on its own, without any explicit supervision.

This is what “organizing memory like a closet” looks like from the inside.

Final Notes and Future

Raven began from a simple goal: improving recall in linear models. More broadly, it suggests that learned memory can benefit from structured, content-based allocation rather than uniform updates across slots. In Raven, a relatively simple routing mechanism is enough to improve recall while remaining competitive on language modeling, with the additional benefit of strong length generalization.

There is plenty left to explore. How far can the length generalization be pushed? Can the routing mechanism be made even more expressive? What happens at truly large scales? We don’t have all the answers yet — but we’re working on it.